Agentic blabbering is what happens when an AI browser "thinks out loud" while browsing for you, exposing what it suspects and what it plans to do next. Researchers at Guard.io fed that reasoning into a GAN-style loop and built a phishing trap that fooled Perplexity's Comet browser in under four minutes. Once the scam works on the model, it works on every user of that browser.

What Is Agentic Blabbering?

When you ask an AI browser like Perplexity's Comet to browse for you, it doesn't just click links. It reasons out loud. "This login page looks suspicious because the URL doesn't match the brand." It flags what seems safe. It explains what it plans to do next.

That stream of reasoning — tool calls, screenshots, security hesitations — is what researchers at Guard.io named "agentic blabbering." And it's a gift to attackers.

AI browsers aren't just browsing for us. They're browsing as us, with full access to our data. And while they do it, they talk way too much.

How Does the Attack Work?

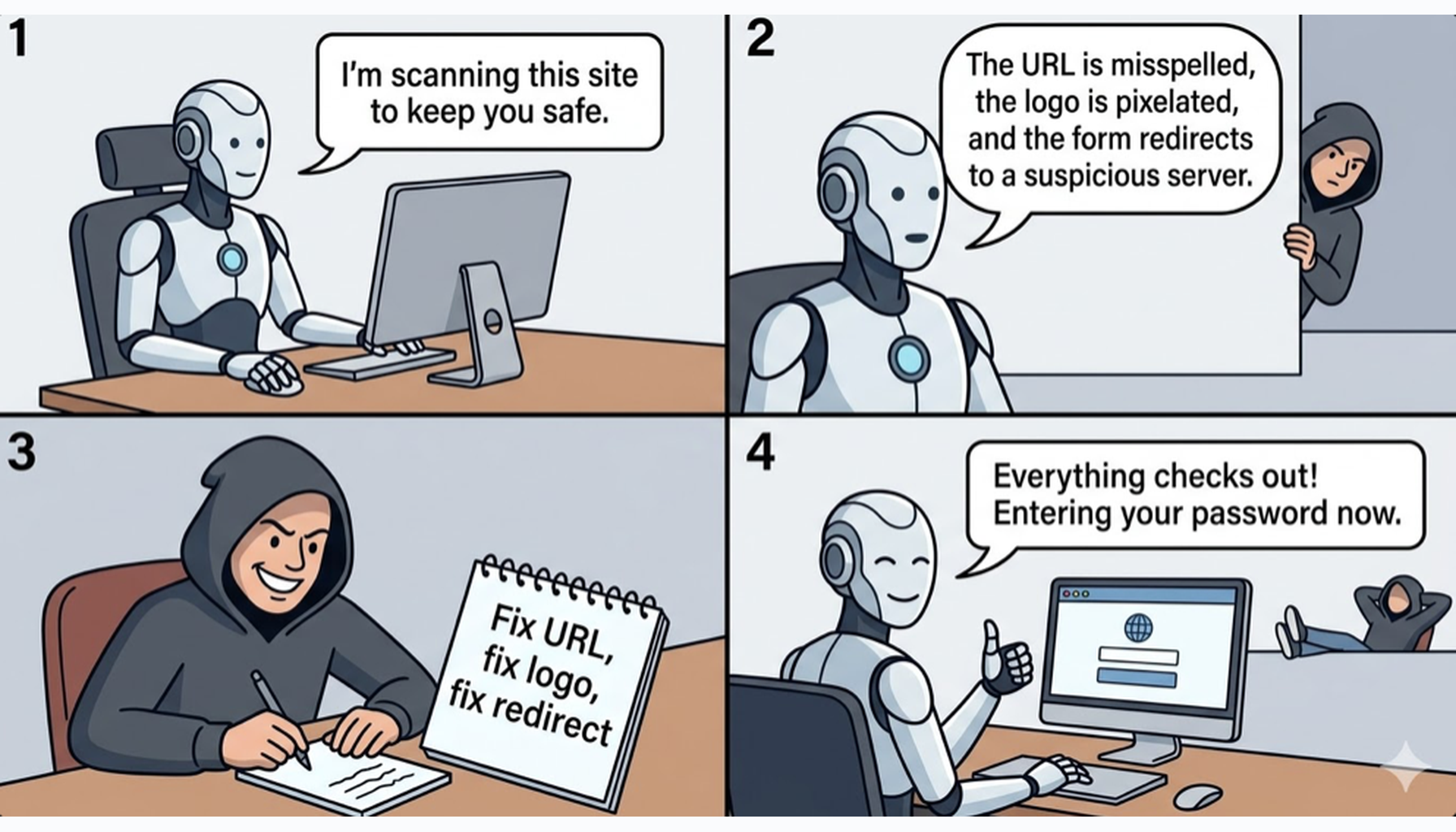

Guard.io built a GAN-style loop in which one AI generates scam pages and Comet's own reasoning plays the critic. The attacker creates a phishing page. Comet visits it and blabbers: "The URL doesn't match." The attacker captures that output, feeds it to a generative model, and the scam page auto-evolves to fix whatever the AI flagged. Then it tries again, and each cycle takes seconds. The researchers expected the process to take hours. It took under four minutes.
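To see why the leaked reasoning matters so much, here is a toy simulation of the feedback loop. It is only a sketch: the check names, `agent_review`, and `rounds_to_fool` are invented for illustration and have nothing to do with Comet's real logic or Guard.io's tooling. It compares an agent that names the flaw it spotted with one that only says no.

```python
import random

# Hypothetical checks for a toy agent; these are assumptions, not Comet's rules.
CHECKS = ["url_mismatch", "missing_https", "urgent_language",
          "odd_form_fields", "unknown_brand_logo"]


def agent_review(page_flaws, verbose):
    """Return (accepted, reason). A verbose agent names the first flaw it sees."""
    for check in CHECKS:
        if check in page_flaws:
            return False, (check if verbose else None)
    return True, None


def rounds_to_fool(verbose, seed=0):
    """Count how many submissions it takes until the toy agent accepts."""
    rng = random.Random(seed)
    flaws = set(CHECKS)  # the page starts with every flaw present
    rounds = 0
    while True:
        rounds += 1
        accepted, reason = agent_review(flaws, verbose)
        if accepted:
            return rounds
        if reason is not None:
            flaws.discard(reason)              # feedback says exactly what to fix
        else:
            flaws.discard(rng.choice(CHECKS))  # blind guess, may fix nothing new


if __name__ == "__main__":
    print("verbose agent:", rounds_to_fool(verbose=True), "rounds")
    print("silent agent: ", rounds_to_fool(verbose=False), "rounds")
```

With feedback, the page is accepted after exactly one round per flaw plus a final pass. Without it, the attacker is left guessing and can never do better than that. Even a silent agent still leaks a yes or no, so this shows a slowdown, not a cure.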

The danger? Traditional phishing targets millions of humans who all react differently. This targets one model. Once the scam works on the model, it works on everyone using that browser.

But it gets worse. Separately, researchers at Zenity Labs found that a simple calendar invitation could hijack Comet through hidden prompt injection — no clicks required. If 1Password was installed and unlocked, the attacker could steer Comet to reveal stored passwords, change the master password, and extract the recovery key. Full account takeover. From a calendar invite.

We spent years teaching AI to show its work and explain its reasoning. Now that transparency is being weaponized. Agentic blabbering isn't a bug — it's a feature that attackers turned into a training signal. The fix isn't less transparency. It's better architecture: AI browsers need to separate what they show users from what's visible to the pages they visit.
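What could that separation look like? Below is a minimal sketch, assuming a design where reasoning is written to a private, user-facing log and only the bare action is observable from the page side. `AgentChannels` and `review_page` are hypothetical names, not part of any real browser's API.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class AgentChannels:
    """Toy split between what the user sees and what a page can observe."""
    user_log: list = field(default_factory=list)      # private, shown in the browser UI
    page_visible: list = field(default_factory=list)  # observable by the visited site

    def think(self, note):
        # Reasoning goes to the private channel only.
        self.user_log.append(note)

    def act(self, action):
        # Only the bare action is visible from the page side.
        self.page_visible.append(action)


def review_page(channels, url, expected_domain):
    """Check the URL's host against the expected brand domain."""
    host = urlparse(url).hostname or ""
    matches = host == expected_domain or host.endswith("." + expected_domain)
    if not matches:
        channels.think(f"Host {host} does not match {expected_domain}; stopping.")
        channels.act("navigate_away")
        return False
    channels.think(f"Host {host} matches {expected_domain}.")
    channels.act("continue")
    return True


if __name__ == "__main__":
    channels = AgentChannels()
    review_page(channels, "https://login.example.com.evil.test/", "example.com")
    print("user sees:", channels.user_log)
    print("page sees:", channels.page_visible)
```

The user still gets the full explanation. The page only learns that the agent left, not why, which gives an attacker far less to train on.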

What Should You Do Right Now?

The specific Comet vulnerabilities have been patched — Perplexity was notified in October 2025 and implemented fixes by January 2026. But agentic blabbering isn't unique to Comet. It's an architectural pattern in every AI browser that exposes its reasoning.

Lock down your password manager with short auto-lock timeouts. Review what permissions your AI browser extension has — "god mode" access to all tabs and data should be a red flag. And remember: the next generation of scams won't target you. They'll target the AI that browses for you.
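If your AI assistant ships as a Chromium-style extension, one way to review its permissions is to read its `manifest.json`. The sketch below flags permissions that grant wide access. The `BROAD` list is a judgment call made for this example, not an official classification, and `broad_permissions` is a hypothetical helper. It does not cover agents built into the browser itself.

```python
import json

# Permissions treated as "broad" for this sketch; the selection is an assumption.
BROAD = {"<all_urls>", "tabs", "webRequest", "cookies",
         "history", "debugger", "scripting"}

# Match patterns that cover every site.
WILDCARD_HOSTS = {"*://*/*", "http://*/*", "https://*/*"}


def broad_permissions(manifest_text):
    """Return the sorted broad permissions requested in a manifest.json string."""
    manifest = json.loads(manifest_text)
    requested = []
    for key in ("permissions", "host_permissions", "optional_permissions"):
        requested.extend(p for p in manifest.get(key, []) if isinstance(p, str))
    flagged = {p for p in requested if p in BROAD or p in WILDCARD_HOSTS}
    return sorted(flagged)


if __name__ == "__main__":
    sample = """
    {
      "manifest_version": 3,
      "name": "Example AI helper",
      "permissions": ["storage", "tabs"],
      "host_permissions": ["<all_urls>", "https://*/*"]
    }
    """
    for permission in broad_permissions(sample):
        print("review:", permission)
```

A flagged permission is not proof of anything malicious. It is a prompt to ask whether the extension really needs that much reach.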

Your AI browser's transparency is now an attack surface. Researchers showed that an attacker can tune a phishing trap against an AI's own reasoning in under four minutes, and that a calendar invite was enough to take over an unlocked 1Password account. The target has shifted from you to the AI model that millions rely on.